“Observability is way more about software engineering than it is about operations.”

Martin Thwaites

Intricate Application — Origins

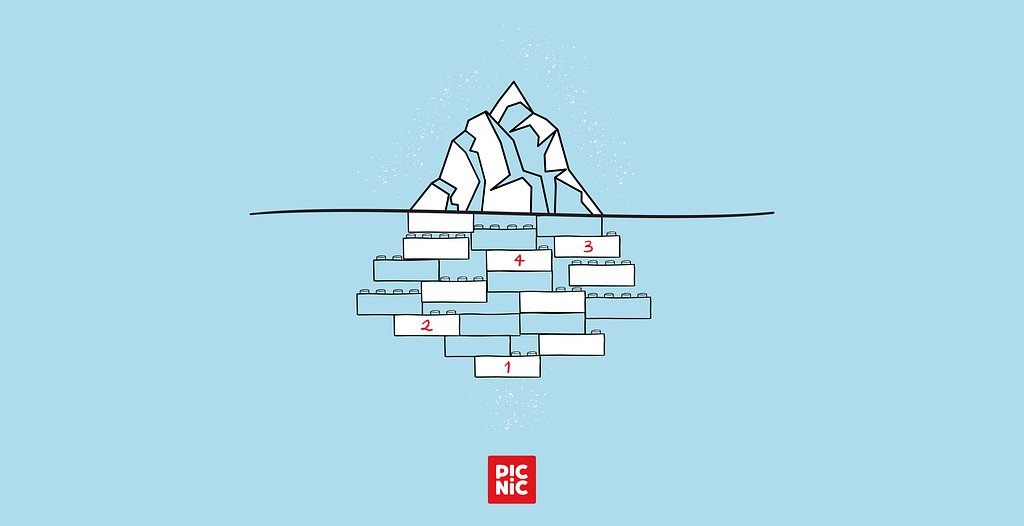

With the growing scope of systems in the modern age and the constant push to optimise manual tasks, some projects get spun up and handled as short-term efforts — but never really get treated like the business-critical components they eventually become. When further optimisation or expansion is needed, these floating projects get rehomed to a new team to take ownership.

This is exactly what happened to our team. The project had been spawned a year before the team even existed — maybe even before the domain did. As most developers know, this kind of situation leads to one of two paths: burn and raze, or go with the flow. Given a tight deadline and hardware already purchased and installed, there wasn’t much of a choice. Going with the flow, it was.

The additional complexity of adoption and maintenance came from the nature of the application itself. This was not a “regular” Java-with-SpringBoot-in-a-K8S-cluster kind of app. Not even close. This application was designed to act as a proxy for communication with hardware installed directly in the warehouse. It runs on Windows. It’s written in Python.

Pretty much everything Java engineers would adore working with.

So if you were expecting great storytelling about JRE 25… that won’t be here. But the story of how you should not handle the adoption of applications, and how you should not build them, will definitely be here. So stick around if you are interested.

How a Small Library Upgrade Can Turn Into a Nightmare

While going with the flow might not have been the best long-term decision, it worked, and six months down the line, there was a functioning solution. Now, you might be wondering what any of this has to do with the topic of this article. Well — that’s where things started going wrong.

Another six months passed, and the application that had been slapped together was becoming outdated. Dependencies were getting deprecated. So, as good developers do, everything got updated, deprecated libraries were replaced with new, improved, and approved alternatives, and the freshly built application was deployed to the biggest warehouse first.

You see, fellow reader, this is exactly what you don’t want to do. This application had bare-bones tests and no detailed documentation on the infrastructure of the connected hardware. After an incident with significant operational impact and no clear picture of what went wrong, a plan emerged to avoid such a large-scale rollout ever again.

Back to the drawing board. Thorough tests were written, grounded in the assumption that the hardware was reliable and that the team was 100% at fault for the failure. The reasoning sounded fully legit at the time: the old version of the application was working. It was no longer upgradable or maintainable, sure — but that’s a different story. It worked.

The new tests increased code coverage from near zero to over 80%, including integration tests with mocked hardware and multiple application instances running simultaneously. This simulated the real architecture of the setup, which sometimes involves multiple Windows machines communicating with one another.

Everything looked good. Confidence was high. Time to go live.

Clean Code and Tests Are Not Nearly Enough

Learning from the previous mistakes, the plan was to deploy the update to a less critical warehouse to verify that it works.

And guess what — it failed again. The whole warehouse was at a standstill during rollback. The worst part? There was no visibility into what was going wrong, other than feedback from people on the warehouse floor.

Investigating on any deeper level meant downloading a log file from the workstation, which required even more downtime on that station and even more frustration from operations.

Time to get smart. The on-station application was hooked up to the generic logging tool, providing near-instantaneous logs while the station was running.

Test number three was ready to commence. But this time, there was a plan: update a single station with the new application version, with log publishing enabled.

And this is when things got really weird. The new version ran successfully. No errors, no missing logs — exactly how it was supposed to work. Bolstered by this newfound success, the deployment extended to another single station in a slightly more critical warehouse.

At this point, it’s worth noting that while all warehouses run on Picnic software, there are differences in hardware requirements and providers. Running the proxy application successfully in one warehouse didn’t put the team in the clear for the others without extra thorough testing.

Drawing on the experience gained so far, extra caution was the rule. This smaller blast radius should have saved the trouble of a full warehouse deployment and possible rollback. And it was the right approach — because within the first ten minutes of the application being live, it came to a screeching halt.

But what was so special about the new version? Why had the old application been running for over a year, happily fulfilling the needs of operations?

A lot of questions. Close to zero answers.

You Can Gain Understanding Only by Knowing the Whole Picture

As it turned out, upgrading the deprecated libraries and replacing some to comply with Picnic policies inadvertently removed a key behaviour baked into the original application: the old version literally crashed whenever it lost connection to the hardware. And the mitigation was deceptively simple: in the old setup, there was a third-party application whose single purpose was to restart the proxy upon termination, regardless of the reason. Think of it as similar to the mechanism Kubernetes uses to maintain replica counts: once a pod goes down, K8S automatically starts a new one to restore the desired number of replicas.

Yes, you’ve read that correctly:

The old application was losing connection to the hardware, crashing, and another application was starting it back up as if nothing had happened.
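To make that restart loop concrete, here is a minimal sketch of what such a watchdog does. It is not the third-party tool from the story: `supervise`, `max_restarts`, and `CRASHING_PROXY` are hypothetical names, and the loop is bounded only so the example terminates (a real watchdog would loop forever).

```python
import subprocess
import sys
import time


def supervise(command, max_restarts=3, delay_seconds=0.0):
    """Run `command` and start it again every time it exits.

    Hypothetical sketch: returns the exit code of each run so the
    behaviour can be inspected. The command runs max_restarts + 1 times.
    """
    exit_codes = []
    for _ in range(max_restarts + 1):
        # Block until the child process terminates, for whatever reason.
        completed = subprocess.run(command)
        exit_codes.append(completed.returncode)
        # The watchdog never asks why the process died; it just waits
        # briefly and starts it again on the next iteration.
        time.sleep(delay_seconds)
    return exit_codes


# Hypothetical stand-in for the old proxy: it "loses the connection" and crashes.
CRASHING_PROXY = [sys.executable, "-c", "import sys; sys.exit(1)"]

if __name__ == "__main__":
    print(supervise(CRASHING_PROXY, max_restarts=2))
```

Notice that nothing in the loop records or reports the reason for the crash, which is how a setup like this can hide an unstable connection for a long time.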

But why wouldn’t this third-party application work with the new version? Simple: the library updates made the application more robust. It now caught errors and logged them instead of crashing outright, so the process never terminated and the restarter never had a reason to step in.

In reality, the old setup was obfuscating the problem of unstable connections and unreliable hardware.

The new libraries made it somewhat more complicated to implement the same kind of failover, but a retry mechanism was added. More importantly, it became clear that the hardware components in play were simply not reliable.
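A retry mechanism of this kind could look roughly like the sketch below. The actual implementation is not shown in this story, so everything here is an assumption: `HardwareConnectionError`, `call_with_retry`, the attempt count, and the exponential backoff are illustrative choices only.

```python
import logging
import time

logger = logging.getLogger("proxy")


class HardwareConnectionError(Exception):
    """Hypothetical error raised when the hardware cannot be reached."""


def call_with_retry(operation, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call `operation`, retrying on connection errors with exponential backoff.

    Re-raises the last error once all attempts are used up, so a
    persistent failure stays visible instead of being swallowed.
    """
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except HardwareConnectionError as error:
            last_error = error
            logger.warning("Attempt %d/%d failed: %s", attempt, attempts, error)
            if attempt < attempts:
                # Wait 0.5s, 1s, 2s, ... (with the default base_delay).
                sleep(base_delay * 2 ** (attempt - 1))
    raise last_error
```

The `sleep` parameter is injectable purely so the backoff can be tested without waiting. Unlike the crash-and-restart setup, every failed attempt leaves a log line behind.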

Later, it turned out that some of the reliability issues were so deeply ingrained into warehouse culture that operators would hard-reset devices without even attempting to diagnose the problem — and without contacting the dev team.

With an approach that no longer assumes hardware reliability, along with additional software fallback steps, the final test was ready. This time round, things went perfectly. The updated application was deployed to both stations, successfully handling hardware malfunctions and software errors alike.

So that’s the end of the story, right? Well, not quite. Next came the longer work of convincing operations that application stability could be guaranteed — and that a simple deployment of a harmless Python application wouldn’t halt an entire warehouse ever again.

Even Small Steps Can Bring You Quite Far

Earning trust back had to come first. Back to the whiteboard. It was clear that some form of transparency into the application was needed, but where to even start? The full stack — Python on Windows — was out of the ordinary for the team, which limited the available options. Not to mention that observability for something outside the Platform team’s focus was essentially non-existent.

Time to improvise. Start small, with the steps the team felt confident implementing.

The decision: build something that had been missing all this time, without anyone realising — health checks via a REST API.

It was straightforward to set up, and the health check exposed the one piece of vital information that mattered about the application’s internals: is it connected to the desired hardware?
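For illustration, a health endpoint like this can be sketched with nothing but the Python standard library. The real implementation is not shown here, so treat the details as assumptions: the `/health` path, the JSON field `hardware_connected`, the 200/503 status codes, and the `is_hardware_connected` callback are all hypothetical.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def make_health_handler(is_hardware_connected):
    """Build a request handler that reports the hardware connection state."""

    class HealthHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            if self.path != "/health":
                self.send_error(404)
                return
            connected = bool(is_hardware_connected())
            body = json.dumps({"hardware_connected": connected}).encode("utf-8")
            # 200 when connected, 503 otherwise, so a monitor only needs
            # to look at the status code.
            self.send_response(200 if connected else 503)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, format, *args):
            # Silence the default per-request logging to stderr.
            pass

    return HealthHandler


def start_health_server(is_hardware_connected, host="127.0.0.1", port=0):
    """Serve the health endpoint on a background thread and return the server.

    port=0 lets the operating system pick a free port.
    """
    handler = make_health_handler(is_hardware_connected)
    server = ThreadingHTTPServer((host, port), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

Running the server on a daemon thread keeps it out of the way of the proxy's main work, and passing the connection check in as a callback means the endpoint always reports the live state rather than a cached one.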

Once implemented and successfully deployed to the test stations, the impact of this small improvement became immediately obvious:

- No more digging through logs to understand if the application was working as expected.

- At any given moment, an accurate answer could come straight from the application itself — is it healthy and able to operate?

It was only the next day that the realisation hit: this change actually opened the door to observability.

Without further ado, a handful of simple monitors were set up in Datadog, running synthetic checks against the stations and hitting the newly created health endpoints.
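Conceptually, each of those checks does something very simple: call the health endpoint and turn the answer into green or red. The sketch below shows that idea in plain Python. It is not Datadog configuration or the Datadog API, and the function names and station URLs are hypothetical.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=2.0):
    """Return the HTTP status code for `url`, or None if it is unreachable."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The station answered, but with an error status such as 503.
        return error.code
    except (urllib.error.URLError, OSError):
        # No answer at all: wrong IP, unplugged cable, station switched off.
        return None


def classify_stations(station_urls, fetch=fetch_status):
    """Map each station name to "green" or "red" based on its health endpoint."""
    results = {}
    for station, url in station_urls.items():
        results[station] = "green" if fetch(url) == 200 else "red"
    return results
```

A call such as `classify_stations({"station-1": "http://station-1.example/health"})` would return a dictionary of station names to colours. An unreachable station and an unhealthy application both end up red here; telling them apart is what the response body and the logs are for.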

They immediately turned green for the test stations, since everything had already been configured there. We were almost crying from happiness.

Convincing business and operations turned out to be easier than expected. There was a huge advantage: definitive knowledge of whether what got rolled out actually worked.

And sure enough, when the full rollout finally happened across the entire warehouse, the majority of Datadog monitors turned green. The improved application, combined with proper observability, was running as intended on the warehouse stations.

After the final successful rollout, it became clear that the stations still showing red were failing because of hardware problems: either network issues (an incorrect IP or an unstable cable) or flaky or outright faulty hardware devices.

But this time, the fault clearly wasn’t with the proxy application. And the path to resolution was obvious.

That is the main reason developers spend — or should spend — so much time on observability: eliminating the mystery and providing clear direction for problem resolution.

And never trust or assume that hardware is stable.


Bringing observability to the workstation was originally published in Picnic Engineering on Medium.

Anahita Singla
