Every organization is adopting AI. Very few are structured to benefit from it.

The Adoption-to-Impact Gap

Picnic is an online-only grocery delivery company operating in the Netherlands, Germany, and France, built from the ground up as a technology company.

Two of its analysts needed to map thousands of external product articles to internal categories across three markets. They started where most companies do: evaluating a vendor platform purpose-built for the job. After testing, the results didn’t meet expectations: low accuracy, limited flexibility, no way to incorporate feedback. So they decided to build it themselves.

Using Claude Code, the two analysts built a replacement from scratch in a single afternoon: an AI-powered mapping engine with configurable algorithms, evaluation against golden test sets, output piped to S3, and a validation UI for human review, all built on existing internal infrastructure. Two production systems, built in hours, at a fraction of the cost. Over the following days, the team iterated on the matching algorithms and surpassed the vendor’s accuracy.

This story isn’t only about AI capability. It is a story about what becomes possible when the right foundations are already in place. It also raises a question worth sitting with: what was it about this particular organization that made this feasible?

The latest industry data tells a clear story: 88% of organizations now use AI in at least one business function, but only 6% qualify as high performers, meaning companies where AI meaningfully drives revenue, reduces cost, or reshapes how work gets done.

What separates the high performers from everyone else?

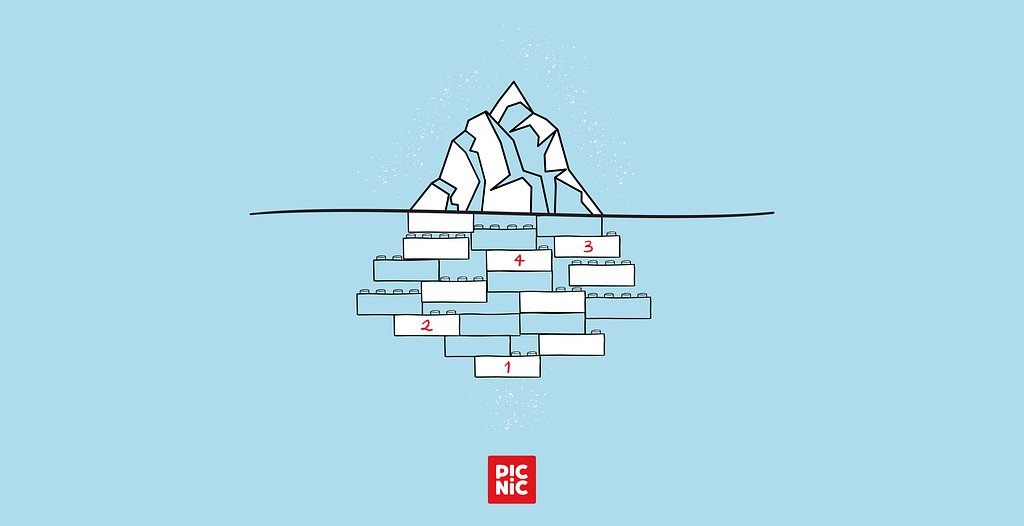

Four Things You Can’t Buy Off the Shelf

Many teams deploying AI in a large organization hit the same walls:

- fragmented data spread across dozens of disconnected systems

- opaque internal tools built for human operators rather than programmatic access

- an expertise gap between the people who understand business problems and the people who can build solutions

- organizational friction (committees, procurement cycles, and approval chains that prevent anything from shipping)

So what do the companies pulling ahead actually have in common? Not AI strategies per se, but design choices made well before large language models existed.

1. Structured data on everything

Not a data lake of semi-structured files and undocumented exports. A well-modeled, governed data warehouse where every operational event (every order, delivery, product change, customer interaction) flows into a single, queryable system of record.

At Picnic, this is the foundation everything else is built on. Every operational event across all markets feeds into a structured data warehouse. When someone needs to answer a business question, they don’t start by requesting a data extract and waiting two weeks. They write a query, or increasingly, describe the question in natural language and let AI write the query for them.

The difference sounds mundane until you consider what it enables. An AI model is only as good as the data it can access. When that data is structured, documented, and queryable, AI goes from “interesting demo” to “operational tool” overnight.
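To make the “single, queryable system of record” idea concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. The table name `order_lines`, its columns, and the sample rows are all hypothetical and are not Picnic’s actual schema. The point is only that a cross-market question becomes one query when every market writes to the same well-modeled table.

```python
import sqlite3

# Hypothetical, simplified schema: one row per order line, all markets in one table.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE order_lines ("
    "market TEXT, product_id TEXT, order_date TEXT, quantity INTEGER, revenue REAL)"
)
conn.executemany(
    "INSERT INTO order_lines VALUES (?, ?, ?, ?, ?)",
    [
        ("NL", "P-100", "2024-02-03", 3, 7.50),
        ("DE", "P-100", "2024-02-10", 2, 5.20),
        ("FR", "P-100", "2024-03-01", 1, 2.70),
        ("NL", "P-200", "2024-02-05", 4, 12.00),  # different product
        ("NL", "P-100", "2023-12-30", 5, 12.50),  # outside the quarter
    ],
)


def product_performance(conn, product_id, start, end):
    """Return (market, units, revenue) per market for one product in [start, end)."""
    rows = conn.execute(
        """
        SELECT market, SUM(quantity), ROUND(SUM(revenue), 2)
        FROM order_lines
        WHERE product_id = ? AND order_date >= ? AND order_date < ?
        GROUP BY market
        ORDER BY market
        """,
        (product_id, start, end),
    )
    return rows.fetchall()


result = product_performance(conn, "P-100", "2024-01-01", "2024-04-01")
print(result)  # [('DE', 2, 5.2), ('FR', 1, 2.7), ('NL', 3, 7.5)]
```

A query of this shape is also the kind of thing an AI assistant can plausibly generate from a natural-language question, provided the tables and columns are documented well enough for it to find them.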

2. API-first systems

The second pillar is less visible but equally critical: every internal system (inventory management, product attributes, CRM, logistics, app configuration, etc.) is accessible programmatically. Not through a screen-scraping workaround, but through proper APIs.

This matters because AI that can only read is only half useful. The real leverage comes when AI can also act: update a product attribute, push a promotional configuration, generate a document and file it in the right system.

At Picnic, where internal systems were built from scratch as a tech-first company, programmatic access is the default, not the exception. The result is that an analyst can build an end-to-end automation, from data query to system action, without filing a ticket with three different platform teams.
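As an illustration of “read and act,” the sketch below pairs a read call with a write call behind one small interface. `InMemoryProductApi`, its methods, and `fill_missing_category` are hypothetical names invented for this example; a real internal API would sit behind HTTP with authentication and access controls. The in-memory stand-in just keeps the example runnable.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryProductApi:
    """Hypothetical stand-in for an internal product-attribute API."""

    attributes: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def get_attribute(self, product_id, name):
        # Read path: returns None when the product or attribute is unknown.
        return self.attributes.get(product_id, {}).get(name)

    def set_attribute(self, product_id, name, value, actor):
        # Write path: every change is recorded with the actor that made it.
        self.attributes.setdefault(product_id, {})[name] = value
        self.audit_log.append((actor, product_id, name, value))


def fill_missing_category(api, product_ids, suggest, actor="mapping-automation"):
    """Read each product's category and write a suggestion only where none exists."""
    updated = []
    for product_id in product_ids:
        if api.get_attribute(product_id, "category") is None:
            api.set_attribute(product_id, "category", suggest(product_id), actor)
            updated.append(product_id)
    return updated


api = InMemoryProductApi(attributes={"P-100": {"category": "dairy"}, "P-200": {}})
updated = fill_missing_category(api, ["P-100", "P-200"], suggest=lambda pid: "beverages")
print(updated)  # ['P-200']
```

Here `suggest` is a placeholder for whatever produces the proposed value, for example a model call. Keeping the write path explicit and logged is one way to let an automation act while leaving a trail for review.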

3. Technically fluent domain experts

Many enterprises enforce a hard split between “the business” and “technology.” The result is a permanent translation layer.

The alternative is to hire people who are both. Picnic’s analyst pool (the people responsible for pricing, supply chain, promotions, app search algorithms, etc.) holds quantitative Master’s degrees in fields like engineering, econometrics, physics, and mathematics. They write Python. They build data pipelines. They think in systems. The person who identifies a commercial problem is the same person who builds the solution. And the reverse holds too: the engineers and developers building systems are also deeply embedded in the business domain.

This isn’t a minor staffing preference. It eliminates the single biggest bottleneck in enterprise AI adoption: the gap between understanding a problem and being able to solve it. When the domain expert can also build, the feedback loop shrinks dramatically. The hiring bar is deliberately high, and it’s one reason this pillar is rare.

4. Builder culture

Data, APIs, and technical talent unlock possibility. Culture is what determines speed. The missing ingredient is permission to actually build and ship.

Builder culture means end-to-end ownership. The analyst who identifies a problem builds the fix, submits it for peer review, and ships it. No six-month roadmap negotiation. That environment attracts and retains a particular kind of person: one with an entrepreneurial mindset, who sees a broken process and instinctively starts building a fix, who treats getting their ideas to production as the default, not a distant aspiration.

This doesn’t mean moving without oversight. Peer review, automated testing, staged rollouts, and access controls remain non-negotiable. A pricing change goes through the same code review as any engineering change. The point is that governance is embedded in the workflow, not imposed as a months-long gate before it.

This combination is what makes the culture self-reinforcing. When the environment encourages initiative and the people in it are wired to take it, the best internal tools emerge organically from users solving their own problems, not from top-down mandates about which platforms to adopt.

These four pillars don’t guarantee AI success. But their absence is a reliable predictor of struggle.

What This Looks Like in Practice

The article-mapping project introduced above deserves a closer look, because the details reveal how the pillars interact.

Our Category Management teams across the Netherlands, Germany, and France needed to map thousands of external articles to our internal product hierarchy. The mapping is essential for range reviews: it tells commercial teams where the gaps are, what the market offers, and what we should stock. Previously, this was extensive manual work that didn’t scale.

After the vendor platform failed to deliver, two analysts used Claude Code to build the replacement.

The system they built was an article-category mapping engine: embeddings and large language models for intelligent matching, configurable algorithms, built-in evaluation against golden test sets, output connected directly to S3, and a validation UI for human review. None of it would have been possible without all four pillars already in place.

The data was structured and standardized across markets, queryable in a single model. The systems exposed programmatic interfaces for reading source data and writing results. The analysts had the technical depth to architect matching engines and validation workflows themselves. And the organization trusted them to own the solution end-to-end.
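The combination of “configurable algorithms” and “evaluation against golden test sets” can be sketched in a few lines. This is not the engine the analysts built: the real system used embeddings and large language models, while the sketch swaps in simple token overlap (Jaccard similarity) so that it runs without any external service. The category descriptions, the golden examples, and all function names are invented for illustration.

```python
from typing import Callable

# A matcher takes an article description and the category catalogue
# and returns the name of the chosen category.
Matcher = Callable[[str, dict[str, str]], str]


def tokens(text: str) -> set[str]:
    return set(text.lower().split())


def jaccard_matcher(article: str, categories: dict[str, str]) -> str:
    """Pick the category whose description has the highest token overlap."""

    def score(description: str) -> float:
        a, b = tokens(article), tokens(description)
        return len(a & b) / len(a | b) if a | b else 0.0

    return max(categories, key=lambda name: score(categories[name]))


def keyword_matcher(article: str, categories: dict[str, str]) -> str:
    """Baseline: first category sharing any token, else the first category."""
    for name, description in categories.items():
        if tokens(article) & tokens(description):
            return name
    return next(iter(categories))


def evaluate(matcher: Matcher, golden: list[tuple[str, str]],
             categories: dict[str, str]) -> float:
    """Fraction of golden examples for which the matcher picks the expected category."""
    correct = sum(matcher(article, categories) == expected
                  for article, expected in golden)
    return correct / len(golden)


# Hypothetical catalogue and golden test set.
categories = {
    "dairy": "milk yoghurt cheese butter",
    "beverages": "juice soda water oat drink",
    "bakery": "bread rolls croissant",
}
golden = [
    ("organic whole milk 1l", "dairy"),
    ("sparkling water 6x", "beverages"),
    ("butter croissant 4 pack", "bakery"),
    ("oat milk drink", "beverages"),
]

matchers = {"jaccard": jaccard_matcher, "keyword": keyword_matcher}
results = {name: evaluate(m, golden, categories) for name, m in matchers.items()}
print(results)  # {'jaccard': 1.0, 'keyword': 0.5}
```

Because every matcher shares one signature and is scored against the same golden set, swapping in a new algorithm becomes a configuration change with a measurable effect, which is what makes day-by-day iteration on accuracy practical.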

The same pattern repeats across operational domains: analysts building end-to-end AI systems that replace platforms and brittle manual processes, not by writing better prompts, but by building modular infrastructure with proper inputs and outputs. In each case, AI provided the capability, but it was the architecture that made it deployable.

The Compound Dividend

This advantage doesn’t plateau. It accelerates.

Each AI solution an analyst builds creates infrastructure for the next one. A data pipeline built to cluster products can be reused for demand forecasting. An API integration built for one system becomes a building block for the next automation. Each tool shared with colleagues multiplies across every team that adopts it.

This is the flywheel: structured data enables AI solutions, which generate better data, which enable more sophisticated AI solutions. The analysts who automate one workflow develop skills and infrastructure that make the next automation faster. The organizational muscle for shipping AI-powered improvements strengthens with every repetition.

Meanwhile, companies without the four pillars face a cold-start problem that is brutally difficult to escape. You can’t deploy AI at scale without clean, structured data, but you can’t justify the cost of data cleanup without demonstrable AI ROI. You can’t build AI automations without API access to internal systems, but the business case for API-first architecture is hard to make without concrete use cases. You can’t close the expertise gap overnight, and you can’t retain technical talent in an organization where they can’t build anything.

The gap widens. McKinsey’s research on what it calls the “agentic organization,” where AI agents operate autonomously within company systems, suggests that the winners in this transition don’t just become more efficient. They become structurally different: leaner, flatter, faster to adapt. The companies that built the right foundations tend to compound their advantage. The companies that didn’t will find that the cost of catching up grows every quarter.

This is not a gap that closes with a bigger AI budget. It is an architectural advantage that deepens with time.

An Honest Audit

The statistics and examples above may feel abstract. So here is a practical exercise: four diagnostic questions, one for each pillar. The answers can tell you a lot about where your organization stands.

**Data.** If an AI agent needed to answer a cross-functional business question, say, “how did this product perform across all markets last quarter?”, could it access the data in a single query? Or would it need six system integrations, three data exports, and someone to reconcile the formats?

**Systems.** If you wanted AI to take an action in your internal systems (update a product attribute, change a configuration, file a document), could it? Or are your systems only accessible through manual interfaces that require a human clicking through screens?

**People.** If you gave your best domain expert an AI coding tool today, could they build something useful this week? Or would they need months of training before they could do anything beyond asking a chatbot questions?

**Organization.** If that person built something useful, could they ship it to their team within days? Or would it need to go through a committee review, a security assessment, a procurement process, and a six-month roadmap negotiation before anyone could use it?

Four questions. Four pillars. The organizations where these answers come naturally tend to be the ones getting the most out of AI, and the difference often has less to do with the technology itself than with everything around it.

The Architecture Behind AI Impact was originally published in Picnic Engineering on Medium.

Eric Smith
