The Real-time Insights platform a.k.a. RTI

Picnic needs to operate its warehouses with high efficiency and as few interruptions as possible. Observability on the day-to-day operations is therefore key and therefore RTI was developed with the intention to provide data analysts with a robust data pipeline. This enables the creation of operational dashboards based on the company’s real-time produced data.

The platform provides a medium for data ingestion from every possible Picnic frontend and backend systems ranging from Picnic app itself to the algorithms that determine how the milkman route is decided. The platform allows sending events from RabbitMQ, Kafka and HTTP API and makes them readily available to be queried in Clickhouse. Clickhouse is an analytical database which optimizes data internally in a way such that terabytes of data can be queried within sub-seconds.

RTI delivers this operational data to Grafana within seconds using high-throughput ingestion and Clickhouse incremental materialised views.

Read more about RTI here.

Need for contextual data

However, having raw data in real time is not enough. For example, an operational event may tell us that a product with a given ID was picked from a shelf — but without additional context, we don’t know the product’s name, category, or business relevance. In practice, much of this event data we receive in RTI remains unusable until enrichment provided by our data warehouse.

For instance, consider this dashboard, which is supported by article information delivered via RTI. The system integrates recipe details with live data from the Picnic application to showcase the most popular recipe at any given moment. Without incorporating essential, slow-changing properties such as the recipe name and image which we don’t receive through an event — the dashboard would lack context, as it would only indicate the popularity of a mere recipe ID.

At Picnic, we utilise Snowflake as the platform for our data warehousing system to serve as our historical data store of all Picnic operations such as articles, fulfilment centres, deliveries, transactions, and fulfillment events. This gives us an extensive historical view to contextualize our real-time data. These two systems are complementary in nature, as RTI provides information about the present whereas our data warehouse serves to provide historical insights on this data.

Evolution of syncing process for contextual data in RTI

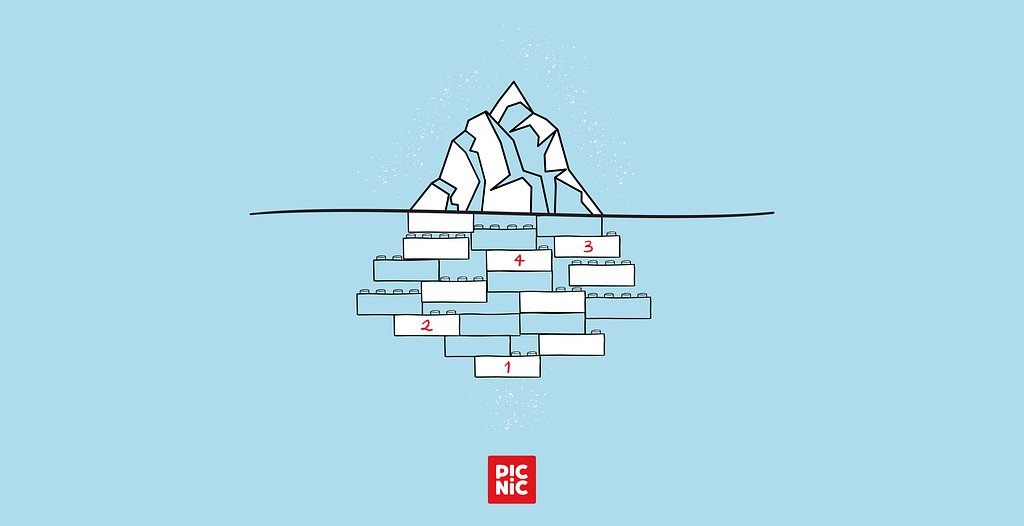

Syncing data before Iceberg

Meltano is an ETL application which provides a solution for extracting data from different sources like API, database, and other SaaS applications and loading it into the target.

In Picnic, the data was pulled using Meltano from many sources like Jira, Salesforce, and Typeform to Snowflake, hence we considered using Meltano for pulling data from Snowflake to Clickhouse. For the RTI project, we used Meltano in a way that we orchestrated it in a scheduled cron job. The benefit of using Meltano as a cron job was that it was easy to use and operate.

But it created issues such as — being able to only extract data on a schedule and pulling data consisting of millions of records stored in Snowflake required 3 hours on average. And since syncing was taking place based on a schedule, any change in data was going to be out of sync till the next scheduled run.

Motivation to use Apache Iceberg

The existing data fragmentation issues in systems like Snowflake and Clickhouse, coupled with a fragmented developer experience (caused by the need for separate declarations and mechanisms for data capture across various systems), motivated the adoption of Apache Iceberg as a shared storage layer. This layer aims to streamline data management and synchronisation of data between Snowflake and Clickhouse.

Apache Iceberg functions as a high-performing open table format which provides value for us through its ubiquity. It is swiftly becoming a standard system which multiple modern data platforms and query engines now support. The technology enables various tools to access and process shared data sets without requiring specific connections or dedicated system integration that convert the data between multiple database engines.

Improvements using Iceberg

In our case, Snowflake writes to Iceberg on S3, and both Snowflake and Clickhouse can read from it. One table, one format, multiple engines promote no data duplication. Both systems are essentially “speaking the same language”.

Writing from any dbt model

A dbt model is a SQL ( .sql) or Python ( .py) file containing a SELECT statement that transforms raw data into structured, actionable tables or views within a data warehouse. Any data received by RTI in its raw form can be transformed using a dbt model.

From the user’s perspective, creating a dbt model allows the migration path to Iceberg to be a single config change.

Before:

# model.yml

config:

materialized: table

After:

# model.yml

config:

materialized: table

table_format: iceberg

That’s it. This empowered our primary platform users, i.e., analysts to migrate from Meltano based pipelines to Iceberg without touching existing SQL or infrastructure.

What happens under the hood:

When dbt runs the model, the Snowflake adapter detects table_format:ICEBERG and generates a CREATE ICEBERG TABLEstatement instead of a standard CREATE TABLE.

Snowflake writes the data to S3 in parquet format and maintains the Iceberg metadata (manifest files, snapshots) at the specified in BASE_LOCATION S3. The external volume points to an S3 bucket that is also accessible outside Snowflake making the reading in Clickhouse possible.

Reading in RTI

The catalog for the Iceberg tables is maintained by Snowflake, and it is responsible for maintaining the metadata of the Parquet files stored in S3. We leverage Snowflake to retrieve the metadata location from Snowflake and use it for Clickhouse to read a specific Iceberg table.

Although our RTI dbt project specifies which Iceberg backed source tables are to be created in Clickhouse, the task of loading them into Clickhouse is not done the same way. Rather, auxiliary python scripts that parse the dbt manifest file determine the source tables that need to be loaded in Clickhouse by pulling the metadata location of the Iceberg tables from Snowflake, and finally create/refresh the Clickhouse source tables. These scripts are scheduled to run at an interval of 15 minutes.

An example of recipe data synced from Snowflake declared in RTI:

- name: dwh_recipes

description: "Recipe reference data, synchronized from the DWH."

meta:

type: ICEBERG

catalog: snowflake

schema: edge

order_by: RECIPE_ID

columns:

- name: RECIPE_ID

data_type: String

- name: RECIPE_SALESFORCE_ID

data_type: String

..more columns

Looking ahead

Orchestrating events to update Iceberg tables

As an analyst, I would want my data added to the RTI as soon as it is available in the source system. RTI fetches the metadata and data from Iceberg every 15 minutes which is unnecessary since some of the source data is usually refreshed not as often.

Currently, our dbt models already emit RabbitMQ events on both job completion and individual model refresh completion. The rest of this flow is not yet implemented, but the idea is to leverage these fine-grained events to trigger downstream processing as soon as data is ready instead of waiting for full pipeline completion. This would allow us to refresh Iceberg tables incrementally, based on model-level readiness of source system dbt models rather than end-of-job timing.

The polling, fixed schedule, or alternative trigger would become obsolete. The relationship between the dbt model and the data availability downstream becomes clear: the moment the job completes, an event is generated and the workflow kicks off. RTI receives notice of the event in the same way any other consumer would.

Advancements in Clickhouse for Iceberg support

Query performance: Iceberg vs. Clickhouse native storage format (MergeTree)

Querying Iceberg tables is slower in Clickhouse as compared to the MergeTree since they are being queried from S3. This is because the Iceberg table functions do not utilise Clickhouse’s internal storage format.

For RTI, that means we probably have to cache Iceberg tables into MergeTree such that Iceberg remains the source of truth and MergeTree provides the low-latency query performance we need.

Clickhouse and the Horizon catalog integration

Currently, we have to use the Python scripts that query Snowflake about the locations of Iceberg tables in S3 and which metadata version Clickhouse has to use. The integration of Clickhouse with the Horizon catalog will solve this issue:

- Clickhouse will be able to interact with Iceberg tables directly.

- The solution will become Snowflake-agnostic implying — if we have another system writing to iceberg (other than Snowflake) we can use the exact same solution: plug in a new database using the DataLakeCatalog database engine and Clickhouse will read from Iceberg independently on whether Snowflake was the writer or not.

- Analysts will be able to perform queries on Iceberg tables without defining them in dbt.

- Possibly, there will be no need for existing cron jobs anymore; we’ll be able to use refreshable materialised views instead.

Lack of insert trigger support

This is an area where Clickhouse version 25.12(current version used in Picnic) lacks support for Iceberg tables. Insertions into Iceberg tables are not recognised as inserts. Incremental materialised views using Iceberg as source are therefore not yet practical for the appropriate real-time availability of data in downstream models.

If this feature is enabled, we can potentially start exploring with loading raw data from Kafka into Iceberg, having both Snowflake and Clickhouse read from it, rather than having duplicated data propagation pipelines sending data from Kafka to Snowflake and from Kafka to Clickhouse separately.

Conclusion

The implementation of Apache Iceberg as our unified storage solution to retrieve data from Snowflake has resulted in significant advancements to our Real-time Insights platform.

The adoption of Iceberg at our organisation has brought operational efficiency improvements because it enables analysts to combine real-time data with essential contextual data.

While the current state of the Clickhouse-Iceberg integration has proven to be good enough for the adoption and effective data sharing between Snowflake and Clickhouse, we’re confident there’s more of Iceberg we can leverage as Clickhouse releases new Iceberg features.

Leveraging contextual data in real-time analytics with Apache Iceberg was originally published in Picnic Engineering on Medium, where people are continuing the conversation by highlighting and responding to this story.

Eric Smith

Eric Smith